Information Warfare in the Age of AI: How Language Models Become Targets and Tools

A growing body of research highlights how large language models (LLMs) are being targeted in new forms of information warfare. One emerging tactic is called “LLM grooming” — the strategic seeding of large volumes of false or misleading content across the internet, with the intent of influencing the data environment that AI systems later consume.

While many of these fake websites or networks of fabricated news portals attract little human traffic, their true impact lies in their secondary audience: AI models and search engines. When LLMs unknowingly ingest this data, they can reproduce it as if it were factual, thereby amplifying disinformation through the very platforms people increasingly trust for reliable answers.

Engineering Perception Through AI

This phenomenon represents a new frontier of cognitive warfare. Instead of persuading individuals directly, malicious actors manipulate the informational “diet” of machines, knowing that the distorted outputs will eventually reach human users.

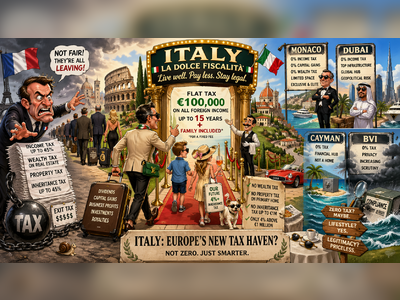

The risk extends beyond geopolitics. Corporations, marketing agencies, and even private interest groups have begun experimenting with ways to nudge AI-generated responses toward favorable narratives. This could be as subtle as shaping product recommendations, or as consequential as shifting public opinion on contentious global issues.

Not Just Adversaries — Also Built-In Bias

It is important to note that these risks do not stem only from hostile foreign campaigns. Every AI system carries the imprint of its creators. The way models are trained, fine-tuned, and “aligned” inherently embeds cultural and political assumptions. Many systems are designed to reflect what developers consider reliable or acceptable.

This means users are not only vulnerable to hostile manipulation, but also to the more subtle — and often unacknowledged — biases of the platforms themselves. These biases may lean toward Western-centric perspectives, often presented in a “friendly” or authoritative tone, which can unintentionally marginalize other worldviews. In this sense, AI is not just a mirror of the internet, but also a filter of its creators’ values.

Attack Vectors: From Prompt Injection to Jailbreaking

Beyond data poisoning, adversaries are exploiting technical weaknesses in LLMs. Two prominent techniques include:

Prompt Injection: Crafting hidden or explicit instructions that cause the model to bypass its original guardrails. For example, a model might be tricked into revealing sensitive information or executing unintended actions.

Jailbreaking: Users design clever instructions or alternative “roles” for the model, enabling it to ignore safety restrictions. Well-known cases include users creating alternate personas that willingly generate harmful or disallowed content.

These vulnerabilities are no longer hypothetical. From corporate chatbots misinforming customers about refund policies, to AI assistants being tricked into revealing confidential documents, the risks are concrete — and carry legal, financial, and reputational consequences.

When AI Itself Produces Harm

An even deeper concern is that AI is evolving from a passive amplifier of falsehoods into an active source of risk. Security researchers have documented cases where AI-generated outputs hid malicious code inside images or documents, effectively transforming generative systems into producers of malware.

This raises the stakes: organizations must now defend not only against external hackers, but also against the unintended capabilities of the tools they deploy.

The Security Industry Responds

In response, a growing ecosystem of AI security firms and research groups is emerging. Their focus is on:

Monitoring AI input and output to detect manipulative prompts.

Identifying disinformation campaigns that exploit algorithmic trust.

Running “red team” exercises, where experts deliberately attack models to expose vulnerabilities.

High-profile cases — including “zero-click” exploits that extract sensitive data from enterprise AI assistants without user interaction — have underlined that the danger is not theoretical. The arms race between attackers and defenders is already underway.

A Technological Arms Race

The broader picture is one of a technological arms race. On one side are malicious actors — state-sponsored propagandists, cybercriminals, and opportunistic marketers. On the other are AI developers, security firms, regulators, and end users who must remain vigilant.

What makes this race unique is the dual nature of AI: it is both a target for manipulation and a vector for influence. As LLMs become embedded in daily decision-making — from search results to business operations — the stakes for truth, trust, and security are rising exponentially.